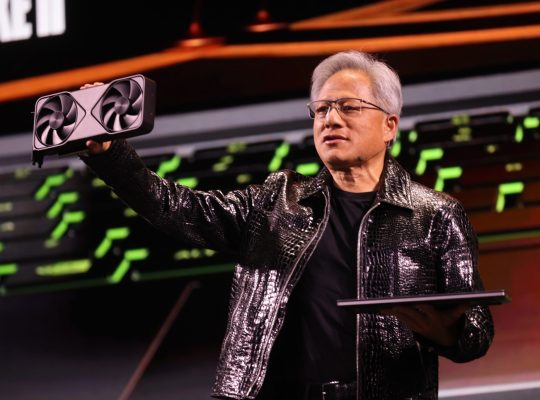

NVIDIA continues to solidify its position as a dominant force in cloud AI solutions in 2025, offering a comprehensive suite of platforms, software, and services that cater to the most demanding enterprise and research needs. Its strategy revolves around providing end-to-end AI factories that are accessible across various cloud environments and continuously optimized for performance, scalability, and ease of use.

Here are the best cloud AI solutions from NVIDIA in 2025:

1. NVIDIA DGX Cloud: The Premier AI Supercomputing Platform

NVIDIA DGX Cloud remains at the pinnacle of NVIDIA’s cloud AI offerings. It’s a fully managed, end-to-end AI platform that provides access to the most advanced NVIDIA DGX systems (featuring the latest Blackwell GPUs and Grace Blackwell Superchips) hosted on leading public cloud providers. In 2025, DGX Cloud has further expanded its reach and capabilities:

Multi-Cloud Availability: DGX Cloud is available across major cloud providers, including Microsoft Azure, Google Cloud, Oracle Cloud Infrastructure (OCI), and Amazon Web Services (AWS). This multi-cloud strategy lets enterprises use NVIDIA’s supercomputing power within their preferred cloud ecosystem.

Blackwell Integration: DGX Cloud instances are equipped with the latest Blackwell GPUs and Grace Blackwell Superchips, which target large-scale AI training workloads, including models with hundreds of billions of parameters.
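To put "hundreds of billions of parameters" in perspective, a rough back-of-the-envelope estimate helps. The sketch below assumes 2 bytes per parameter for 16-bit weights and roughly 16 bytes per parameter for mixed-precision training with an Adam-style optimizer. That is a common rule of thumb, not an NVIDIA figure, and the 175-billion-parameter model size is only an example. Activations and framework overhead are ignored.

```python
GB = 1e9  # decimal gigabytes


def memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Approximate memory footprint in GB for a given per-parameter cost."""
    return num_params * bytes_per_param / GB


# Assumed values, for illustration only.
EXAMPLE_PARAMS = 175_000_000_000  # hypothetical 175B-parameter model
WEIGHT_BYTES_16BIT = 2            # FP16/BF16 weights
TRAINING_BYTES_MIXED_ADAM = 16    # weights + gradients + FP32 optimizer state (rule of thumb)

inference_gb = memory_gb(EXAMPLE_PARAMS, WEIGHT_BYTES_16BIT)
training_gb = memory_gb(EXAMPLE_PARAMS, TRAINING_BYTES_MIXED_ADAM)
print(f"weights only: {inference_gb:.0f} GB, training state: {training_gb:.0f} GB")
```

Under these assumptions the weights alone occupy about 350 GB and the training state about 2.8 TB, which is why models of this size are sharded across many GPUs.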

DGX Cloud Lepton: A significant announcement in 2025, DGX Cloud Lepton connects developers with tens of thousands of GPUs from a global network of NVIDIA Cloud Partners (NCPs). This compute marketplace provides flexible access to GPU capacity for both on-demand and long-term computing needs, which matters for strategic and sovereign AI requirements. It integrates with NVIDIA software such as NIM and NeMo.

Seamless Integration with NVIDIA Software Stack: DGX Cloud is deeply integrated with NVIDIA’s extensive AI software ecosystem, including NVIDIA AI Enterprise, NeMo, and NIM microservices, ensuring optimized performance and a streamlined development experience.

2. NVIDIA AI Enterprise: The Software Backbone for Cloud AI

NVIDIA AI Enterprise is not a cloud service in itself; it is the cloud-native software suite that underpins NVIDIA’s AI offerings in the cloud. In 2025, its features have been enhanced to deliver optimized performance, robust security, and stability for production AI deployments:

Optimized Microservices (NIM): NVIDIA NIM (NVIDIA Inference Microservices) is a set of easy-to-use microservices that accelerate the deployment of foundation models on any cloud or data center. They provide production-grade runtimes with ongoing security updates and stable APIs, which makes it much easier for enterprises to move from prototype to production.
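As a minimal sketch of what a stable API means in practice: NIM microservices for LLMs expose an OpenAI-compatible HTTP interface, so a client only needs to build a standard chat completion request. The base URL and model name below are assumptions for illustration; substitute the values from your own deployment. The example builds the request without sending it, so it runs without a live endpoint.

```python
import json
import urllib.request

# Assumptions for illustration: a NIM LLM container reachable at this address
# and serving this model. Replace both with values from your own deployment.
BASE_URL = "http://localhost:8000/v1"
MODEL = "meta/llama-3.1-8b-instruct"


def build_chat_request(prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    parsed = json.loads(response_body)
    return parsed["choices"][0]["message"]["content"]


if __name__ == "__main__":
    request = build_chat_request("Summarize what a GPU does in one sentence.")
    # Sending requires a running endpoint:
    # with urllib.request.urlopen(request) as response:
    #     print(extract_reply(response.read()))
    print(request.full_url)
```

Because the request shape follows the OpenAI convention, moving from a prototype endpoint to a production one should mostly be a matter of changing `BASE_URL` and `MODEL`.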

Comprehensive Application Layer: NVIDIA AI Enterprise provides a rich application layer with NVIDIA-built and optimized AI frameworks, pre-trained models, and SDKs. This includes:

Agentic AI Blueprints: Designed to help developers build and deploy sophisticated AI agents for various enterprise workflows.

Generative AI Tools: Support for building and customizing large language models (LLMs), vision language models (VLMs), and other generative AI applications.

Data Science and Machine Learning Libraries: Optimized libraries for data preprocessing, data ingestion, and accelerating AI workloads.

Robust Infrastructure Layer: The platform includes essential infrastructure software like GPU and networking drivers, Kubernetes operators for managing GPU and networking in containers, and cluster management software for scaling AI applications.
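To illustrate what GPU management in containers looks like from the workload side, here is a minimal sketch that generates a Kubernetes Pod manifest requesting GPUs. It assumes the cluster already advertises the `nvidia.com/gpu` resource (for example, through NVIDIA's GPU Operator or device plugin). The pod name and container image are hypothetical placeholders.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Return a Pod manifest that asks the scheduler for `gpus` NVIDIA GPUs."""
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resources such as GPUs are requested under limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical image name, for illustration only.
    manifest = gpu_pod_manifest("train-job", "example.com/my-training-image:latest", gpus=2)
    print(json.dumps(manifest, indent=2))
```

The JSON output can be saved to a file and applied with `kubectl apply -f`, since Kubernetes accepts manifests in JSON as well as YAML.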

Focus on Enterprise Readiness: NVIDIA AI Enterprise emphasizes enterprise-grade security, support, and stability, addressing critical concerns for businesses deploying AI at scale. It offers different software branches (Production, Feature, Long-Term Support) to cater to varying enterprise needs for stability and access to the latest features.

3. NVIDIA NeMo: Building and Deploying Custom Generative AI

NVIDIA NeMo is an end-to-end platform specifically designed for developing custom generative AI, including LLMs, multimodal models, and speech AI. In 2025, NeMo’s cloud capabilities include:

Cloud-Native Framework: NeMo is built for scalability in cloud environments, supporting accelerated training and customization of large-scale generative AI models.

NeMo Curator: This service is critical for cloud AI, enabling the processing of text, image, and video data at scale to improve generative AI model accuracy. It also provides pre-built pipelines for generating synthetic data, which is invaluable for augmenting datasets in the cloud.
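The kind of processing described above can be sketched in a few lines. The example below is a toy, single-machine illustration of two common curation steps, a length-based quality filter and exact deduplication. It does not use the NeMo Curator API; the function names and the `min_words` threshold are assumptions made for this example.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return re.sub(r"\s+", " ", text).strip().lower()


def curate(documents: list[str], min_words: int = 5) -> list[str]:
    """Drop short documents and exact duplicates, keeping first occurrences."""
    seen: set[str] = set()
    kept: list[str] = []
    for doc in documents:
        canonical = normalize(doc)
        if len(canonical.split()) < min_words:
            continue  # quality filter: too short to be useful
        digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate after normalization
        seen.add(digest)
        kept.append(doc)
    return kept


if __name__ == "__main__":
    sample = [
        "GPUs accelerate matrix math for deep learning.",
        "gpus   accelerate matrix math for deep learning.",
        "Too short.",
    ]
    print(curate(sample))
```

A production pipeline would add fuzzy deduplication, language identification, and distributed execution, but the shape is the same: normalize, filter, then deduplicate.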

NeMo Customizer: A high-performance, scalable microservice that simplifies the fine-tuning and alignment of LLMs for domain-specific use cases, making it easier to adapt generative AI for various industries directly in the cloud.

NeMo Evaluator & Retriever: NeMo Evaluator provides tools for assessing generative AI models, and NeMo Retriever handles information retrieval. Both are crucial for building robust retrieval-augmented generation (RAG) applications in the cloud with high accuracy and data privacy.
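For readers new to RAG, the pattern can be sketched as: retrieve the passages most relevant to a question, then place them in the prompt sent to the model. The example below is a toy illustration that uses bag-of-words cosine similarity in place of a real embedding model. It is not the NeMo Retriever API, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages most similar to the query."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Assemble a grounded prompt from the top-k retrieved passages."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    docs = [
        "The warranty covers parts for two years.",
        "Support is available by email on weekdays.",
        "Shipping takes five business days.",
    ]
    print(build_prompt("How long does the warranty cover parts?", docs, k=1))
```

In a real deployment the retrieval step would query a vector index built with a learned embedding model, and the assembled prompt would be sent to an LLM endpoint. Keeping retrieval separate from generation is also what allows private data to stay in a store the enterprise controls.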

Integration with NIM: NeMo models can be easily deployed as NVIDIA NIM microservices, ensuring secure, reliable, and high-performance inference across clouds.

Support for Sovereign LLMs: NVIDIA is actively partnering with European cloud providers and model builders to optimize sovereign LLMs using NeMo model-building techniques. These models are designed to be deployed as NVIDIA NIM microservices on regional cloud infrastructure, reflecting local languages and culture while ensuring data sovereignty.

4. NVIDIA BioNeMo & Omniverse Cloud: Specialized Cloud AI

Beyond general-purpose AI, NVIDIA offers specialized cloud AI solutions for specific industries:

NVIDIA BioNeMo: This AI-driven platform is tailored for life sciences research and discovery in the cloud. It accelerates drug discovery and genomic sequencing by providing pre-trained models and tools for molecular dynamics, protein structure prediction, and more, all accessible as cloud services.

NVIDIA Omniverse Cloud: Integrating advanced simulation and AI into complex 3D workflows, Omniverse Cloud enables collaborative 3D design, digital twin development, and robotic simulation in the cloud. It’s crucial for industries like manufacturing, architecture, engineering, and construction, allowing for real-time, physically accurate simulations powered by cloud AI.

Why NVIDIA’s Cloud AI Solutions Stand Out in 2025:

Full-Stack Optimization: NVIDIA’s strength lies in its integrated hardware (GPUs) and software (CUDA, cuDNN, AI Enterprise, NeMo, NIM) stack, which is optimized for peak AI performance in the cloud.

Scalability and Performance: By combining DGX Cloud with the Blackwell architecture, NVIDIA provides the scalability needed to train and deploy even the largest AI models.

Enterprise-Grade Focus: With NVIDIA AI Enterprise and NIM, there’s a strong emphasis on production readiness, security, and ease of deployment for complex enterprise AI workloads.

Accessibility and Flexibility: Through DGX Cloud Lepton and partnerships with major cloud providers, NVIDIA is making its powerful AI capabilities more accessible to a wider range of developers and businesses, while also supporting hybrid cloud strategies.

Pioneering “Physical AI”: While many cloud AI solutions focus on digital data, NVIDIA’s advancements in platforms like Cosmos and Isaac GR00T, supported by cloud infrastructure, are laying the groundwork for “physical AI” – intelligent systems that interact with the real world, from robotics to autonomous vehicles.

In 2025, NVIDIA’s cloud AI solutions are not just about raw compute power; they’re about providing a comprehensive, integrated, and optimized ecosystem that empowers enterprises to build, deploy, and scale cutting-edge AI applications with greater efficiency, security, and speed.