NVIDIA’s Blackwell GPU architecture represents a monumental leap in performance for deep learning and AI, designed specifically to tackle the ever-growing demands of large language models (LLMs) and generative AI. It achieves this through a combination of significant architectural innovations and raw computational power.

Here’s a breakdown of how Blackwell GPUs improve deep learning and AI performance:

1. Unprecedented Raw Compute Power

- Massive Transistor Count: Blackwell GPUs, particularly the B200, pack an astounding 208 billion transistors. This sheer density allows for more processing units and more complex circuitry dedicated to AI workloads.

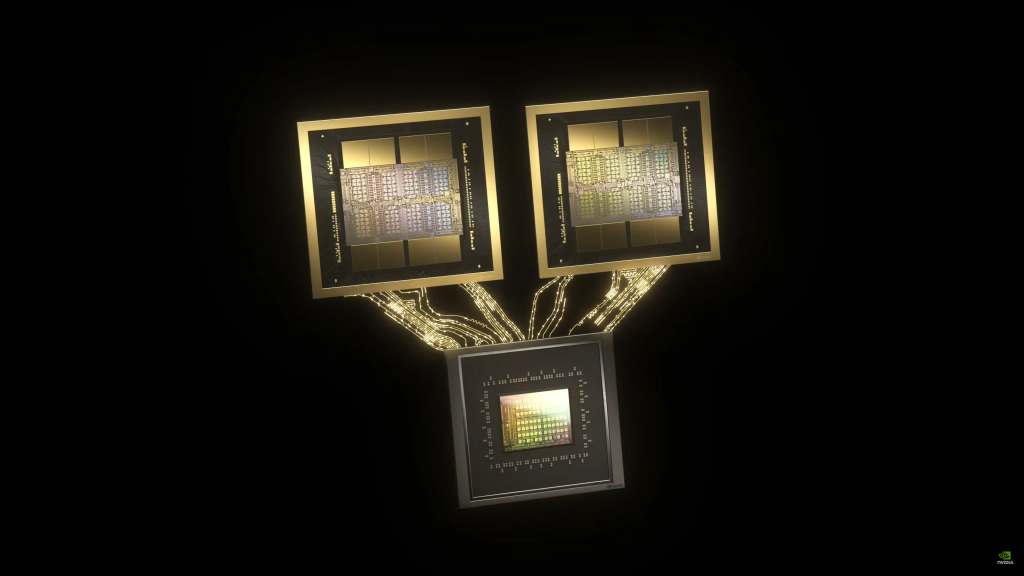

- Dual-Die Design with NV-HBI: Instead of a single monolithic die, the B200 combines two reticle-limited GPU dies connected by a 10 TB/s chip-to-chip link, the NV-High Bandwidth Interface (NV-HBI). This lets the two dies operate as a single, unified GPU with full cache coherency, effectively doubling the computational resources within one package.

- Next-Generation Tensor Cores: Blackwell features fifth-generation Tensor Cores optimized for AI computation. These cores deliver significantly higher throughput for matrix operations, the backbone of deep learning. For example, a single B200 reaches 4.5 petaFLOPS of tensor throughput in FP16/BF16 and up to 9 petaFLOPS in FP8 (peak figures with structured sparsity; dense rates are half that), more than double the corresponding peaks of the H100.
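Peak Tensor Core rates are easier to interpret against a concrete workload. The following sketch converts the peak figures quoted above into a lower bound on runtime for one matrix multiply. It assumes perfect utilization, which real kernels never reach, and uses a hypothetical 8192 x 8192 x 8192 GEMM; the helper names are illustrative and not part of any NVIDIA library.

```python
# Idealized GEMM runtime at a quoted peak Tensor Core rate.
# Assumption: 100% sustained utilization, so these are lower bounds only.

def gemm_flops(m: int, n: int, k: int) -> int:
    """FLOPs for C = A @ B with A (m x k) and B (k x n): one multiply + one add per MAC."""
    return 2 * m * n * k


def ideal_seconds(flops: float, peak_pflops: float) -> float:
    """Runtime if the GPU sustained peak_pflops (1 PFLOPS = 10**15 FLOP/s)."""
    return flops / (peak_pflops * 1e15)


if __name__ == "__main__":
    # Hypothetical square GEMM, roughly the size of a large transformer projection.
    flops = gemm_flops(8192, 8192, 8192)
    for label, peak in [("FP16/BF16 @ 4.5 PF", 4.5), ("FP8 @ 9 PF", 9.0)]:
        print(f"{label}: {ideal_seconds(flops, peak) * 1e6:.1f} us")
```

Doubling the peak rate halves this bound only while the kernel stays compute-bound; for small matrices, memory traffic usually dominates instead.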

2. Revolutionary Precision Formats for AI

FP4 and MXFP4/MXFP6 Support: Blackwell introduces native Tensor Core support for new 4-bit and 6-bit floating-point formats (FP4, FP6, and the microscaling variants MXFP4 and MXFP6). This is a game-changer for AI inference: models can run with significantly less memory and higher throughput while, with appropriate scaling, largely preserving accuracy.

- Doubled Effective Throughput: Compared with FP8, FP4 can double the effective throughput and the model size a GPU can handle.

- Reduced Memory Footprint: Using lower precision formats means less memory is required for model weights and activations, enabling larger models to fit into GPU memory or run with fewer GPUs.
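As a rough illustration of these savings, the sketch below estimates weight storage for a hypothetical 70-billion-parameter model at several precisions. It counts weights only and ignores activations, KV cache, optimizer state, and the small per-block scale overhead of the MX formats (one 8-bit scale per 32 elements, about 0.25 extra bits per value).

```python
# Weight-only memory estimate per numeric format.
# Ignores activations, KV cache, optimizer state, and block-scale overhead.

BITS_PER_PARAM = {"FP16/BF16": 16, "FP8": 8, "FP6": 6, "FP4": 4}


def weight_gb(num_params: float, bits: int) -> float:
    """Weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits / 8 / 1e9


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model, used purely as an example
    for fmt, bits in BITS_PER_PARAM.items():
        print(f"{fmt:>9}: {weight_gb(params, bits):6.1f} GB")
```

At FP16/BF16 the weights alone (140 GB) exceed an 80 GB GPU, while at FP4 they shrink to 35 GB.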

Second-Generation Transformer Engine: Building on the Transformer Engine introduced with Hopper, Blackwell's second-generation engine adds micro-tensor scaling and new dynamic range management algorithms to exploit these low-precision formats. It adjusts numeric precision dynamically during training and inference, preserving accuracy while maximizing performance.
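The idea behind fine-grained (block) scaling can be sketched in a few lines. The example below emulates MXFP4 as described in the OCP Microscaling (MX) specification: each block of 32 values shares one power-of-two scale, and each element is rounded to the FP4 E2M1 grid. It is a simplified software model under those assumptions, not the Transformer Engine's actual recipe: ties round toward the smaller magnitude instead of to nearest-even, the E8M0 scale's exponent range is not enforced, and all function names are hypothetical.

```python
import math

# FP4 E2M1 magnitudes (the MXFP4 element type): 1 sign, 2 exponent, 1 mantissa bit.
FP4_E2M1_VALUES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
FP4_MAX = 6.0
FP4_EMAX = 2      # exponent of the largest normal value: 6.0 = 1.5 * 2**2
BLOCK_SIZE = 32   # MX block size: 32 elements share one scale


def round_to_fp4(x: float) -> float:
    """Round to the nearest E2M1 value, clamping magnitudes above 6.0.

    Simplification: ties go to the smaller magnitude (hardware uses round-to-nearest-even).
    """
    mag = min(abs(x), FP4_MAX)
    nearest = min(FP4_E2M1_VALUES, key=lambda v: abs(v - mag))
    return math.copysign(nearest, x)


def quantize_block(block):
    """Return (shared_exp, fp4_elements) for one block of floats."""
    amax = max(abs(v) for v in block)
    if amax == 0.0:
        return 0, [0.0] * len(block)
    # frexp gives amax = m * 2**e with 0.5 <= m < 1, so floor(log2(amax)) == e - 1.
    _, e = math.frexp(amax)
    shared_exp = (e - 1) - FP4_EMAX
    scale = 2.0 ** shared_exp
    return shared_exp, [round_to_fp4(v / scale) for v in block]


def dequantize_block(shared_exp, elems):
    """Multiply the FP4 elements back by their shared power-of-two scale."""
    scale = 2.0 ** shared_exp
    return [x * scale for x in elems]


def mx_quantize(values):
    """Quantize a flat list block by block; each block gets its own scale."""
    return [quantize_block(values[i:i + BLOCK_SIZE])
            for i in range(0, len(values), BLOCK_SIZE)]


if __name__ == "__main__":
    # Two blocks of 32 values; the outlier 100.0 lives only in the second block.
    data = [1.0] * 32 + [1.0] * 31 + [100.0]
    for exp, elems in mx_quantize(data):
        restored = dequantize_block(exp, elems)
        print(f"scale=2**{exp}: first={restored[0]}, last={restored[-1]}")
```

Because each block has its own scale, the outlier only degrades its own block: the first block reconstructs 1.0 exactly, while in the second block the 1.0 entries flush to zero and 100.0 is clamped to 96.0. Small blocks are what keep this kind of damage local in 4-bit formats.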

3. Enhanced Memory Architecture

- Higher Bandwidth Memory (HBM3e): Blackwell GPUs integrate HBM3e memory, offering a substantial leap in capacity and bandwidth. A B200 can have up to 192 GB of HBM3e (2.4x the 80 GB of an H100) and aggregate memory bandwidth of up to 8 TB/s (about 2.4x the H100's bandwidth).

- Addressing the “Memory Wall”: Larger capacity and higher bandwidth matter because LLM and generative AI workloads are often limited by memory rather than compute, in both training and inference. Capacity determines how much of a model fits on each GPU, and bandwidth determines how quickly weights and activations reach the compute units, reducing idle cycles.

4. Scalability and Interconnect Improvements

- Fifth-Generation NVLink: Blackwell features an advanced fifth-generation NVLink, providing a remarkable 1.8 TB/s bidirectional throughput per GPU. This ultra-fast interconnect is essential for seamless communication between multiple GPUs, enabling the scaling of AI workloads across massive clusters.

- NVLink Switch: The GB200 NVL72 system uses NVLink Switch chips to connect 72 Blackwell GPUs (paired with 36 Grace CPUs) in a single NVLink domain that behaves like one massive GPU, with 1.4 exaflops of FP4 AI performance and 30 TB of fast memory. This shared, high-bandwidth domain and coordinated workload distribution significantly reduce communication overhead in distributed training.

- Efficient Distributed Training: The enhanced NVLink and NVLink Switch technologies enable more efficient distributed training of multi-trillion-parameter models by providing high-speed, low-latency communication across up to 576 GPUs in one NVLink domain, with larger clusters connected over InfiniBand or Ethernet.
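The effect of per-GPU link bandwidth on distributed training can be estimated with the standard bandwidth model for ring all-reduce, in which each of N GPUs sends about 2(N - 1)/N times the buffer size. The sketch below assumes the 1.8 TB/s bidirectional NVLink figure splits into roughly 900 GB/s per direction, ignores latency terms, and uses a hypothetical 140 GB BF16 gradient buffer (a 70B-parameter model). Collective libraries such as NCCL may pick other algorithms, such as tree or in-switch reductions, so treat this as an order-of-magnitude estimate.

```python
# Bandwidth-only model of ring all-reduce (no latency or software overhead terms).

def ring_allreduce_seconds(num_bytes: float, num_gpus: int, bw_per_direction_gb_s: float) -> float:
    """Estimated time for one ring all-reduce of num_bytes across num_gpus GPUs."""
    if num_gpus < 2:
        return 0.0
    # Reduce-scatter + all-gather: each GPU sends 2 * (N - 1) / N * num_bytes in total.
    traffic = 2 * (num_gpus - 1) / num_gpus * num_bytes
    return traffic / (bw_per_direction_gb_s * 1e9)


if __name__ == "__main__":
    grad_bytes = 70e9 * 2   # hypothetical: BF16 gradients of a 70B-parameter model
    nvlink5_gb_s = 900.0    # 1.8 TB/s bidirectional -> ~900 GB/s per direction
    for n in (8, 72):
        t = ring_allreduce_seconds(grad_bytes, n, nvlink5_gb_s)
        print(f"{n:>2} GPUs: ~{t:.3f} s per full-gradient all-reduce")
```

Because 2(N - 1)/N approaches 2, the estimate barely grows from 8 to 72 GPUs; per-link bandwidth, not GPU count, dominates, which is why a faster NVLink shortens every synchronization step.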

5. Specialized Accelerators and Engines

- Decompression Engine: A dedicated decompression engine accelerates common compression formats such as LZ4, Snappy, and Deflate, speeding up database queries and data analytics workloads. It can also help pipelines that load and process the massive compressed datasets used in AI training.

- RAS Engine (Reliability, Availability, Serviceability): Blackwell GPUs include a dedicated RAS engine that uses AI-based preventative maintenance to run diagnostics and forecast reliability issues, maximizing system uptime and minimizing interruptions in large-scale AI deployments.

- Hardware and Software Co-optimization: NVIDIA designs Blackwell with a tightly integrated hardware and software stack. Frameworks like TensorRT-LLM and NeMo Megatron are optimized to leverage Blackwell's architectural innovations, so developers can use features such as the new low-precision formats without hand-writing low-level kernels. The open-source Triton compiler also supports Blackwell, exposing its new Tensor Core capabilities through a Python-based programming model.

Real-World Impact

The cumulative effect of these improvements is a dramatic increase in AI and deep learning performance:

- Faster LLM Training: Blackwell can deliver up to 2x performance improvement per GPU for LLM pre-training (e.g., GPT-3 175B) and fine-tuning (e.g., Llama 2 70B). At a system level, configurations like the GB200 NVL72 can achieve 2.2x faster training for models like Llama 3.1 405B compared to Hopper.

- Accelerated LLM Inference: For real-time inference on massive models such as a 1.8-trillion-parameter GPT-MoE, NVIDIA reports up to 15x higher inference throughput per Blackwell GPU compared with an H100, and up to 30x at the system level with the GB200 NVL72.

- Reduced Cost and Energy Consumption: By dramatically increasing performance and efficiency, Blackwell systems such as the GB200 NVL72 can reduce the cost and energy consumption of large-scale LLM inference by up to 25x compared with Hopper (H100), according to NVIDIA.

In essence, NVIDIA’s Blackwell GPUs are not just faster, but are fundamentally redesigned to handle the scale and complexity of the next generation of AI models, particularly generative AI and LLMs, making it possible to train and deploy these models more efficiently and at a much larger scale than ever before.